The Pulse: Did capacity shortages turn Anthropic hostile to devs?

For the past few weeks, Anthropic has continually upset devs with its “dumber” model, and by removing Claude Code access from some paid accounts. Could the reason have been to conceal capacity issues?

Hi, this is Gergely with a free issue of the Pragmatic Engineer Newsletter. In every issue, I cover Big Tech and startups through the lens of senior engineers and engineering leaders. Today, we cover one out of five topics from last week’s The Pulse issue. Full subscribers received the article below seven days ago.

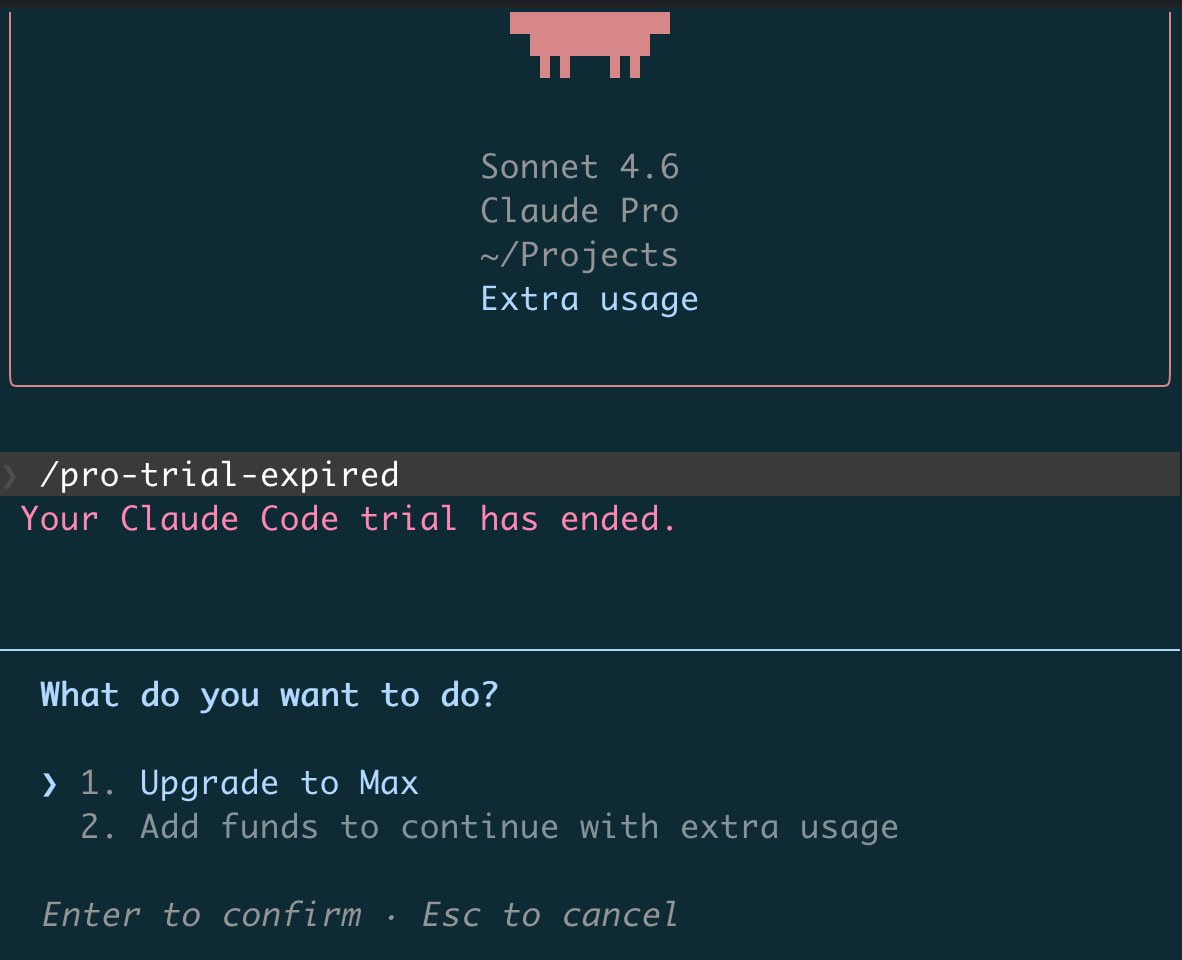

Last week, we reported on Anthropic seemingly being on a speed run to break devs’ goodwill by silently “nerfing” Claude Code, banning corporate accounts without warning, and a weird growth experiment involving revoking Claude Code and then restoring it. This week, a dev on the $20/month Pro plan had Claude Code removed just days into their subscription.

This week, Anthropic also announced a big data center expansion and relaxed previous usage limits, while Elon Musk’s SpaceX / xAI (a single company after a merger) is renting its complete Colossus 1 data center to Anthropic. From the announcement:

“Colossus 1 features over 220,000 NVIDIA GPUs, including dense deployments of H100, H200, and next-generation GB200 accelerators. The cluster delivers extreme parallel performance for large language models, multimodal systems, scientific simulations, and generative AI at frontier scale.

Anthropic plans to use this additional compute to directly improve capacity for Claude Pro and Claude Max subscribers.”

In parallel with this release, Anthropic announced:

Doubling Claude Code’s current 5-hour limits for Pro, Max, Team, and seat-based Enterprise plans

Removing peak hours limit reduction on Claude Code for Pro and Max plans

Substantially raising API rate limits for Opus models

Is it possible that capacity issues are what led Anthropic to make Claude worse? It’s confirmed the company has struggled with capacity for months. Conveniently, Claude Code being “nerfed” led to lower compute load, while removing Claude Code access from cheap plans could look like rate limiting. Even the banning of corporate accounts could be seen as scaling back at a time when the business has struggled to serve existing growth. Yesterday (6 May), at the Code with Claude event hosted by Anthropic, CEO Dario Amodei said:

“We originally planned for 10x growth, and we’ve seen something more like 80x growth in revenue and usage over the last period of time.”

SpaceX / xAI renting a good chunk of its capacity to Anthropic is ironic, considering that xAI (Musk’s AI startup) builds Grok, a frontier model and direct rival of Claude. Also, in January, Anthropic banned xAI developers from Claude. As covered at the time:

“It’s common for an AI lab to not allow another AI lab to use its model, like at OpenAI, Anthropic, and Google. On the other side, there’s also the pertinent question of why a leading AI lab would even want to use a rival for its own day-to-day work?

Turns out, xAI (Elon Musk’s AI lab) was relying on Cursor to write code, which we know because they got cut off.”

Anthropic likely banned xAI to stop Claude from potentially being distilled while xAI tried to improve Grok’s coding capability. Meanwhile, Musk called Anthropic “misanthropic and evil” earlier this year, and said the new tenant “hates Western civilization”. But both parties seem happy to put that behind them and strike a deal, so perhaps there’s something else at play.

Could SpaceX / xAI be checking out of the frontier-AI model wars? Leasing a good chunk of its data center capacity might suggest that. SpaceX / xAI has two data centers: Colossus 1 and Colossus 2. Colossus 1 represents somewhere around 45% of current SpaceX / xAI capacity, and 20-25% of planned total capacity.

Giving up as much capacity as this might indicate a lack of demand, or capacity sitting idle. It also means Grok is losing out in market share to Claude, ChatGPT, and other leading models. In February’s AI tooling survey we found scarce mention of Grok, which lagged in usage behind open models like DeepSeek and Qwen.

To be fair, unlike Anthropic and OpenAI, Grok never had a B2C or B2B business that took off. The biggest consumer use case for Grok seems to be its integration into the social media platform, X; at least, I don’t know of any tech company using the model for serious work.

“The enemy of my enemy is my friend”, says the maxim, and if there’s one company Musk hates, it’s OpenAI. He is currently suing OpenAI, claiming it betrayed its founding nonprofit mission to develop safe AGI for humanity’s benefit by shifting to a profit-driven model backed by Microsoft. Musk also claims that despite investing about $40M, he has no ownership of the company.

He wants $150B in damages, the removal of Sam Altman and Greg Brockman, and for OpenAI to return to being a full nonprofit, as it was when he invested in the company. We covered more about OpenAI’s own ethical challenges between nonprofit and for-profit right after the firing of Sam Altman in 2023, in the deepdive What is OpenAI, really?

Similarly, Anthropic may well have an issue with OpenAI, if CEO Dario Amodei’s failure to join hands with Sam Altman while sharing a stage with the Prime Minister of India earlier this year is anything to go by.

Capacity issues hurting Anthropic would benefit OpenAI, and so by offering significant capacity to Anthropic, Musk is making it harder for OpenAI to win the market. That would be ironic, given he’s a former investor.

Read the full issue of last week’s The Pulse, or check out this week’s The Pulse. This week’s issue covers:

Forward deployed engineering heats up again. Massive demand for the role at Google, OpenAI, and Anthropic. The latest version of the FDE role looks like the consultant / solution architect role done by many early-junior engineers.

Why are layoffs spiking? Tech job cuts are higher than at any time since early 2023, for various reasons: smaller teams prompt reorgs and reduce the need for middle management. Meanwhile, poorly performing companies make layoffs without the influence of AI.

New trend: self-reporting 100% AI generated code at Microsoft. With mid-year performance reviews looming, some managers advise their reports to claim they use AI for everything.

Industry Pulse. Tokenmaxxing at Amazon, too, SaaS companies grow faster than before – perhaps partly due to AI, Bun rewritten in Rust with AI works well, Anthropic overtakes OpenAI in enterprise spend, and more.

Vibe coding & agentic engineering get uncomfortably close. A relatable observation by software engineer, Simon Willison, about reviewing AI agents’ code less than would be ideal.