Are AI agents actually slowing us down?

As more software engineers use AI agents daily, there’s also more sloppy software, outages, quality issues, and even a slowdown in shipping velocity. What’s happening, and how do we solve it?

When it comes to AI agents and AI tooling, most of the discussion focuses on their potential to boost efficiency, speed up iteration, and push out more code, faster.

Last week, we took an inside look into how Uber is adopting AI, internally. The rideshare giant has built close to a dozen internal systems to deal with code generated by AI agents. However, when quantifying the impact of AI, the focus was on how much output has increased, and how devs who use more AI also generate more pull requests; these are the “power user” devs who generate 52% more PRs than devs who use AI less. There was no mention of product quality – at all!

And there are signs that product quality is dropping overall. Today, we dig into this under-discussed topic, covering:

1. Anthropic: degraded flagship website. An annoying UX issue irritated paying Claude customers – and no one at Anthropic noticed. The company moves very fast, generates 80%+ of production code with Claude, but quality and user experience seem to be taking a backseat.

2. Amazon: AI-agent reliance triggers SEVs. Amazon’s retail org has seen a jump in outages caused by its own AI agents. Now, senior sign-off is needed for junior engineers’ AI-assisted changes.

3. Big Tech: “use AI or you’re unproductive.” Companies like Meta and Uber are tracking AI token usage in performance reviews, putting pressure on engineers to use it heavily – irrespective of the tools’ quality impact.

4. OpenCode: more time spent cleaning up. Dax Raad, OpenCode’s creator, warns that AI agents are lowering the bar for what ships, discouraging refactoring, and don’t speed teams up.

5. Startups: founders see LLMs slowing down long-term velocity. Sentry’s CTO and others observe that while AI removes the barrier to getting started, it also produces bloated, hard-to-maintain code that slows long-term development.

6. Research: AI agents underperform claims. Some studies show AI coding tools produce short-lived velocity gains followed by significant tech debt increases.

7. How do we solve it? Engineers with strong architectural sense become more critical than ever. Proposed solutions include formal validation methods, and perhaps reviving some old-school QA ideas.

1. Anthropic: degraded flagship website

This article’s genesis was last week, when I’d finally had enough of a persistent UX bug on Claude’s flagship website: the prompt I typed in regularly got lost. Below is a video of me typing “How can I…” – and “losing” the first two words when the page loaded:

It’s pretty straightforward:

The page starts to render and the textbox is displayed

The user starts to type their prompt, but the page has not finished loading subscription data

The subscription information loads around a second later

The textbox is reset and the typing is lost
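The sequence above can be modeled in a few lines. This is a minimal sketch in Python, not the site’s actual front-end code, which isn’t public; the `Composer` class and its method names are hypothetical. It contrasts a handler that resets the textbox when late data arrives with one that keeps whatever the user has already typed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Composer:
    """Hypothetical model of a prompt textbox plus late-loading account data."""
    draft: str = ""
    plan: Optional[str] = None  # unknown until subscription data arrives

    def type_text(self, text: str) -> None:
        self.draft += text

    def on_subscription_loaded_buggy(self, plan: str) -> None:
        # Treats the late data as a fresh render: the textbox is reset too.
        self.draft = ""
        self.plan = plan

    def on_subscription_loaded_fixed(self, plan: str) -> None:
        # Updates only the field that changed; the draft is left alone.
        self.plan = plan


def simulate(handler_name: str) -> str:
    """Type before and after the data loads; return what the textbox ends up holding."""
    composer = Composer()
    composer.type_text("How can ")            # typed while the page is still loading
    getattr(composer, handler_name)("paid")   # subscription data arrives
    composer.type_text("I...")                # typed after the load
    return composer.draft


print(simulate("on_subscription_loaded_buggy"))  # I...
print(simulate("on_subscription_loaded_fixed"))  # How can I...
```

Under these assumptions, the buggy handler drops the first two words, the same symptom as in the video. A test that types before the data arrives would likely catch it.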

This is a pretty basic bug you might expect in a prototype, except that this is the landing page of Claude.ai, and it’s a bug that impacts every paying customer – easily millions – every day. Even worse, the bug happens every time you visit the site.

Somehow, nobody at Anthropic tested the site to catch a plainly obvious bug which impacted 100% of paying customers. At the same time, no company uses AI coding tools more than Anthropic: around 80% of the company’s code is now generated by Claude Code, so we can assume a good part of the website is also created that way.

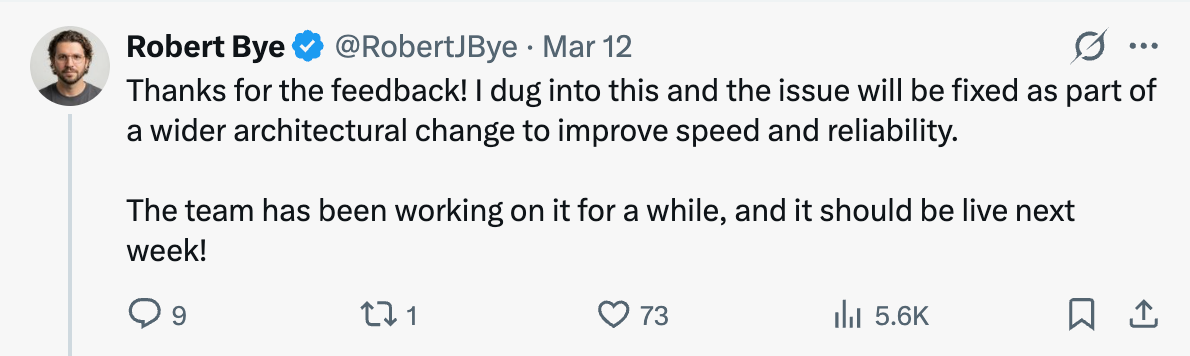

My complaint about Anthropic’s website being broken went a bit viral and got the attention of the developer team:

To their credit, three days later the bug was gone. There’s no longer a “double load” of the textbox: it takes a bit longer to load but only does so once.

Still, it makes me wonder how much longer this issue would’ve continued had nobody complained. Also, how many more bugs are present on the Claude website that nobody highlighted on social media? How many more features could be shipped in a state that is subpar for production-grade software with millions of paying customers?

Anthropic seems to be prioritizing moving very fast over doing so with high quality. There is no denying that the company is moving at incredible speed and running laps around competitors. A good example is how they built Claude Cowork in just 10 days. Claude Cowork handled work with Microsoft Word and Excel documents surprisingly well, to the point that it set off a “code red” inside Microsoft’s Office division, I understand.

Microsoft responded as fast as possible, but it still took 2-3 months to launch its (cloned) response, Copilot Cowork, earlier this month, with full access to follow soon.

In the case of Anthropic, moving fast with okay quality seems to make good business sense: they build a better product than what already exists, so it doesn’t matter if it’s a bit rough around the edges; they can fix quality issues post-launch and still be months ahead of the competition.

2. Amazon: reliance on AI agents causes SEVs

Anthropic can afford to move fast while it’s growing at an extremely high rate and expanding its market share rapidly. By contrast, established players like Amazon have an extreme focus on reliability: AWS has become the top cloud provider not least by being extremely reliable (as well as aggressive on pricing).

Well, reliability at the online retailer seems to be getting worse, too, and the company’s AI agent, Kiro, could be causing SEVs (Amazon’s phrase for “outage”), according to The Financial Times (emphasis mine):

“Amazon’s ecommerce business has summoned a large group of engineers to a meeting on Tuesday for a “deep dive” into a spate of outages, including incidents tied to the use of AI coding tools.

The online retail giant said there had been a “trend of incidents” in recent months, characterised by a “high blast radius” and “Gen-AI assisted changes” among other factors, according to a briefing note for the meeting seen by the FT.

Under “contributing factors” the note included “novel GenAI usage for which best practices and safeguards are not yet fully established”.

“Folks, as you likely know, the availability of the site and related infrastructure has not been good recently,” Dave Treadwell, a senior vice-president at the group, told employees in an email, also seen by the FT. (...)

He asked staff to attend the meeting, which is normally optional.

Junior and mid-level engineers require more senior engineers to sign off any AI-assisted changes, Treadwell added in the briefing note.”

This meeting was the regular “This Week in Stores Tech” operational one. What was new was the note telling staff to attend this “optional” meeting, and the mandate for senior engineers to sign off on AI-assisted changes from junior and mid-level engineers. The outages may have been caused by less experienced engineers over-trusting GenAI’s output. AWS also had incidents linked to AI coding assistants, said the FT:

“Separately, the company’s cloud computing arm — Amazon Web Services — has suffered at least two incidents linked to the use of AI coding assistants, which the company has been actively rolling out to its staff.

AWS suffered a 13-hour interruption to a cost calculator used by customers in mid-December after engineers allowed the group’s Kiro AI coding tool to make certain changes, and the AI tool opted to “delete and recreate the environment”, the FT previously reported.”

Again, a tool causing an outage is not the tool’s fault: it’s on the engineer who lets the tool run wild. If I delete two lines of code, then push it to production, and the server crashes, the fault is not with the text editor or the Git client, but with me, the person who made the change. Similarly, if you prompt an AI agent to do something, and the AI agent goes off and does its stuff which causes an outage, then responsibility lies with the engineer who didn’t set up guardrails for the agent.

However, there is the issue that AI agents can wreak havoc in ways devs don’t quite understand or expect, until learning the hard way. This was what took down a lesser-used AWS service, according to the report:

“Amazon Web Services experienced a 13-hour interruption to one system used by its customers in mid-December [2025] after engineers allowed its Kiro AI coding tool to make certain changes, according to four people familiar with the matter.

The people said the agentic tool, which can take autonomous actions on behalf of users, determined that the best course of action was to “delete and recreate the environment”.”

It sounds like an engineer gave overly broad permissions to the coding agent which then used its scope to delete a service. As mentioned, the engineer is responsible, but there is also a learning curve with these AI agents to consider: it’s not like this type of outage happened in the past. Plus, companies like Amazon are heavily incentivizing using AI agents for as much work as possible, which naturally leads to overuse.
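To make “guardrails” a bit more concrete, here is a minimal sketch of one possible approach: check each action an agent proposes against the scope it was granted, and hold destructive steps until a person approves them. Everything here is an assumption for illustration, including the `AgentAction` type, the `review_action` function, the list of destructive verbs and the target names; none of it reflects how Kiro or AWS actually work.

```python
from dataclasses import dataclass
from typing import Tuple

# Assumption: verbs treated as destructive for this illustration.
DESTRUCTIVE_VERBS = {"delete", "recreate", "drop", "terminate"}


@dataclass(frozen=True)
class AgentAction:
    """Hypothetical description of one step an agent wants to take."""
    verb: str    # e.g. "update", "delete"
    target: str  # e.g. "staging/demo-service"


def review_action(action: AgentAction,
                  allowed_prefixes: Tuple[str, ...],
                  human_approved: bool = False) -> str:
    """Return "allow", "needs_approval", or "deny" for a proposed agent action."""
    if not action.target.startswith(allowed_prefixes):
        return "deny"  # outside the scope granted to the agent
    if action.verb in DESTRUCTIVE_VERBS and not human_approved:
        return "needs_approval"  # destructive steps wait for a person
    return "allow"


scope = ("staging/",)
print(review_action(AgentAction("update", "staging/demo-service"), scope))  # allow
print(review_action(AgentAction("delete", "staging/demo-service"), scope))  # needs_approval
print(review_action(AgentAction("update", "prod/demo-service"), scope))     # deny
```

A gate like this only limits the blast radius. Choosing a narrow scope in the first place is still up to the engineer.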

3. Big Tech: “use AI or you’re unproductive”

Something happens at places that measure devs’ AI usage: pressure builds for all devs to use more AI or else be seen as unproductive and at risk of poor performance reviews, potentially leading to a PIP or worse.

Meta is taking token usage into account during perf reviews. A current engineering manager at the social media giant told me that the token usage of each engineer is now a data point — one of many! — for performance calibrations. By itself, it is not a positive or negative signal, but someone perceived as having low impact and with low token usage is now seen as a blatant low performer. For high performers with outstanding impact, very high token usage is seen as a good thing as it conveys to the manager group that they’re personally invested in AI and are improving their workflow – as proved by results.

We previously covered how performance calibrations work at places like Meta.

Big Tech CEOs are starting to see AI “power user devs” as superior to their coworkers. Uber is a good example: the Dev Platform team started to analyze the output of engineers by whether or not they’re in the “power user” category, meaning they use AI agents at least 20 days per month. They found more PR output by engineers who are power users. So far, that’s useful data, but it’s just one piece of information, and doesn’t reveal the quality of the PRs, the impact of the engineer, or any other business outcome.
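As a rough illustration of what such an analysis measures, the sketch below splits engineers at the 20-days-per-month threshold and compares mean PR counts. The `pr_uplift` function and the sample data are invented for this example and are not Uber’s method or numbers; the sample was chosen so that the result matches the 52% figure.

```python
from statistics import mean

# Threshold from the article: AI agent use on at least 20 days per month.
POWER_USER_MIN_DAYS = 20


def pr_uplift(engineers):
    """Relative difference in mean PRs between power users and everyone else.

    `engineers` is a list of (ai_days_per_month, prs_per_month) pairs.
    """
    power = [prs for days, prs in engineers if days >= POWER_USER_MIN_DAYS]
    others = [prs for days, prs in engineers if days < POWER_USER_MIN_DAYS]
    if not power or not others:
        raise ValueError("need at least one engineer in each group")
    return mean(power) / mean(others) - 1


# Invented sample data: (AI days per month, PRs per month).
sample = [(22, 19), (25, 19), (5, 12), (10, 13)]
print(f"{pr_uplift(sample):.0%} more PRs")  # 52% more PRs
```

Note what the inputs leave out: nothing about PR size, review outcomes, reverts, or incidents enters the calculation, so the result describes output volume only.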

By the time this data reaches CEO level, it has turned into something else. Here’s Uber CEO, Dara Khosrowshahi, interpreting the same data points on the Diary of a CEO podcast (emphasis mine):

“While 90% of our engineers are using AI tools of some sort, there’s about 30% of them that are using them at a completely accelerated pace. And it [using AI tools heavily] really is changing their productivity in a way that I’ve never ever seen before.”

There’s a step from observing more PRs per engineer, to judging power users as being more productive for that reason. Dara continues:

“I can imagine maybe 5 years from now, as the engineers get more and more productive, that I may not decide to add engineering headcount because at that point instead of adding an engineer, I should add agents and buy some more GPUs from Nvidia. That may be the investment in the future.”

Unsaid in the above is that by that time, only engineers “using AI at a completely accelerated pace” would be employed. Would it also mean that engineers not on the bandwagon are on the way out? I appreciate Dara speaking his mind and shedding light on the thought process of a Big Tech CEO.

Inside large tech companies, it’s becoming a career risk to not use AI at an accelerated pace, regardless of output quality. These large companies are the ones likely to be mulling layoffs, like Meta reportedly preparing to cut up to 20% of staff. And when it comes to identifying redundancies, it’s a fair assumption that things like “AI usage” and “pull requests per engineer” will be taken into account, especially as one theme of such layoffs will almost certainly be that the employer wants to focus more on AI.

So, it’s common sense (and self-preservation) for engineers to use more AI, if only not to be seen as unproductive. Their perceived output will rise, and engineering leadership will share more reports about productivity being up, interpreting more generated code and more pull requests as proof.

4. OpenCode: “more time spent cleaning up”

Dax Raad is founder and CEO of OpenCode, an open source AI coding agent into which you can plug models like Claude, ChatGPT, Gemini, and others. It’s an increasingly popular alternative to the likes of Claude Code and Codex. In our recent AI tooling survey, it came up as a tool used nearly as much as Google’s Gemini CLI and Antigravity. A small team works on this increasingly influential tool, and is seeing problems with AI overuse. Dax wrote this note to the OpenCode team (emphasis mine):